Truth is now a business growth strategy.

For 15 years, the attention economy attacked reality, and AI has now accelerated the assault. But truth is fighting back and winning, becoming a business growth strategy.

For the past fifteen years, the biggest corporations in the world have built their empires around one idea: your attention is the ultimate product. The truth was never their main concern. It was all about engagement.

The algorithms created by social media platforms were not designed to prioritize what was real. Instead, they were focused on what would grab your attention and keep you hooked. Accuracy was overshadowed by outrage, fear took precedence over fact, and the quick fix of confirmation overpowered the hard work of actually understanding something.

This wasn't a secret plot; it was simply their business model. And it worked, until it didn't. But now, a new, more perilous threat has emerged.

Artificial intelligence has made it incredibly easy and cheap to produce convincing content. With just a subscription and a prompt, a single person can generate a video of a world leader saying something they never actually said, complete with their voice and mannerisms. The infrastructure for deception has been made available to anyone.

Yet the infrastructure for verification has not caught up. This is the scenario George Orwell warned us about in his novel 1984. The most dangerous weapon of the Ministry of Truth was not the lie itself, but the systematic destruction of the citizens' ability to trust their own perception of reality.

When everything is propaganda, it becomes impossible to know what is real. And when that happens, people stop trying. They retreat into their own tribe's shared narrative and call it the truth.

The challenge for all of us now is to sift through the overwhelming noise and find the truth. As the saying goes, "truth is treason in an empire of lies." Unfortunately, this is no longer a fictional dystopia, but a description of our current global information environment, amplified by social media and now turbocharged by AI. The algorithms were never optimized for what is true, but rather for what is stimulating.

And most of us never bothered to read the fine print. The scale of this problem is not just a matter of rhetoric; it is measurable. The World Economic Forum has listed AI-generated disinformation as the number one global risk for two consecutive years.

Studies have shown that false stories spread six times faster on social media than true ones, reaching ten times more people. However, this is not solely due to algorithms promoting false information. In fact, it is humans who are responsible for sharing what is most likely to outrage, surprise, or confirm their beliefs.

And statistically, the truth does not always evoke these reactions as easily as a well-crafted lie. Meanwhile, authoritarian leaders from around the world have perfected the use of propaganda to manipulate their citizens. Their goal is not just to make people believe their lies, but to make them unable to believe anything at all.

This is known as "epistemological exhaustion": a population so bombarded with conflicting narratives that it simply gives up trying to make sense of reality and instead clings to the familiar narrative of its tribe. And AI is the perfect tool for achieving this state. But what makes the early months of 2026 a pivotal moment in this story is a $200 million government contract awarded to an AI company.

The Pentagon demanded that the company remove two restrictions: no mass domestic surveillance of American citizens and no fully autonomous weapons. The company refused to comply. The government threatened to label it a national security risk, and the president ordered all federal agencies to stop using its products.

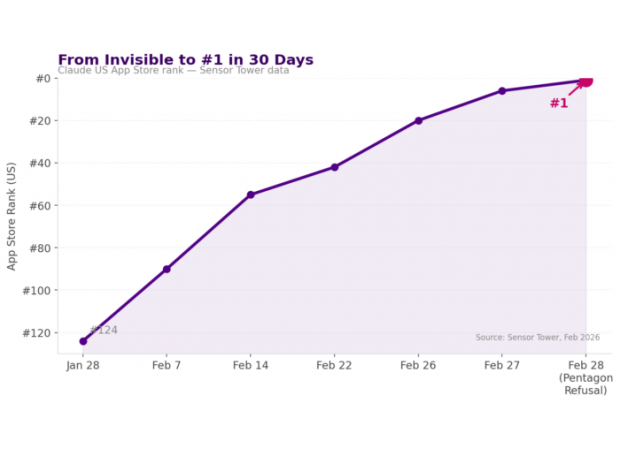

But the company stood its ground. In a public statement, CEO Dario Amodei simply said, "We cannot in good conscience accede to their request." And within 24 hours, something remarkable happened. The company's app, called Claude, climbed from 42nd to the number one spot in the Apple App Store.

And the download numbers were even more impressive when compared to the competition's. Consumer sentiment towards ChatGPT, another AI app, didn't just soften; it collapsed. Uninstalls of ChatGPT increased by 295% in a single day, from a normal daily churn rate of 9% to a full-blown consumer revolt.

People took to social media to express their dissatisfaction, and the QuitGPT movement gained 1.5 million actions within five days. One-star reviews spiked by 775% in just 24 hours, while five-star reviews dropped by 50% in the same period. It was a stunning turn of events: the company behind Claude lost a $200 million government contract, yet gained more brand equity in 48 hours than most companies do in a decade.

For the past fifteen years, the biggest corporations in the world have been thriving on one simple concept: your attention is the product. The truth was never the focus; it was all about engagement. The algorithms created by social media platforms were not designed to prioritize what was real, but rather what was stimulating.

Emotions like outrage and fear were valued over factual information. People were addicted to the instant gratification of having their beliefs confirmed, rather than putting in the effort to truly understand something. This wasn't some sort of secret conspiracy; it was just a clever business model that worked incredibly well, until it didn't.

But now, we face an even more dangerous threat. With the rise of artificial intelligence, the cost of creating convincing content has dropped significantly. With just a subscription and a prompt, one can produce a photorealistic video of a world leader saying things they never actually said, in their own voice and with their mannerisms, all in a matter of seconds.

The infrastructure for spreading deception has become accessible to the masses, while the infrastructure for verifying the truth has not. In his novel 1984, George Orwell warned us about this exact scenario. The Ministry of Truth's most powerful weapon was not the lie itself, but rather the systematic destruction of citizens' ability to trust their own perception of reality.

When everything is propaganda, it becomes impossible to know what is true. And when people stop trying to discern the truth, they retreat into their own tribe's narrative, convinced that it is the ultimate truth. In this chaotic information environment, our greatest challenge is to find the truth amidst all the noise.

As the saying goes, "Truth is treason in an empire of lies." This once fictional dystopia is now a reality, amplified on a global scale by social media and turbocharged by AI. And the scary truth is, the algorithms were never optimized for what was real, but rather for what was stimulating. Most of us never bothered to read the fine print.

The scale of this problem is not just rhetoric; it is measurable. The World Economic Forum has identified AI-generated disinformation as the number one global risk for two years in a row. Studies have shown that false stories spread six times faster on social media than true ones, reaching ten times more people.

And the reason is not that the algorithms themselves promote false information, but that humans are more likely to share things that shock, surprise, or confirm their beliefs. In a world where emotions reign supreme, the truth often gets lost in the chaos. Meanwhile, authoritarian regimes around the world have perfected the art of spreading propaganda.

But their goal is no longer just to make people believe their lies; it is to make them doubt everything. This is known as "epistemological exhaustion," where the population is bombarded with so many conflicting narratives that people simply give up trying to navigate reality and instead choose the one that feels most familiar. And with AI at their disposal, this goal has become even more achievable.

But in early 2026, something monumental happened. The AI company behind the Claude app was awarded a $200 million government contract. The Pentagon, in an unprecedented move, demanded that the company remove two restrictions: no mass domestic surveillance of American citizens and no fully autonomous weapons.

But the company refused to comply, even when threatened with being labeled a national security risk. In response, the president ordered all federal agencies to stop using its products. And CEO Dario Amodei made a powerful statement, simply saying, "We cannot in good conscience accede to their request." The impact of this decision was immediate and astounding.

Within 24 hours, the Claude app became the number one downloaded app in the Apple App Store, surpassing even ChatGPT, the leading AI chatbot app. And it wasn't just about the numbers: consumer sentiment towards ChatGPT completely changed. People were outraged and took action, resulting in a 295% increase in uninstalls and a massive spike in negative reviews.

In just 48 hours, the company behind Claude had generated more brand equity than most companies do in a decade. This pivotal moment has shown us the power of standing up for what is right, even when it means going against the norm. And as we continue to navigate this complex and ever-changing information landscape, it is up to all of us to demand the truth and refuse to settle for anything less.

Because in a world where deception is rampant and propaganda is the norm, the only way to find truth is to actively seek it out and never stop questioning.